
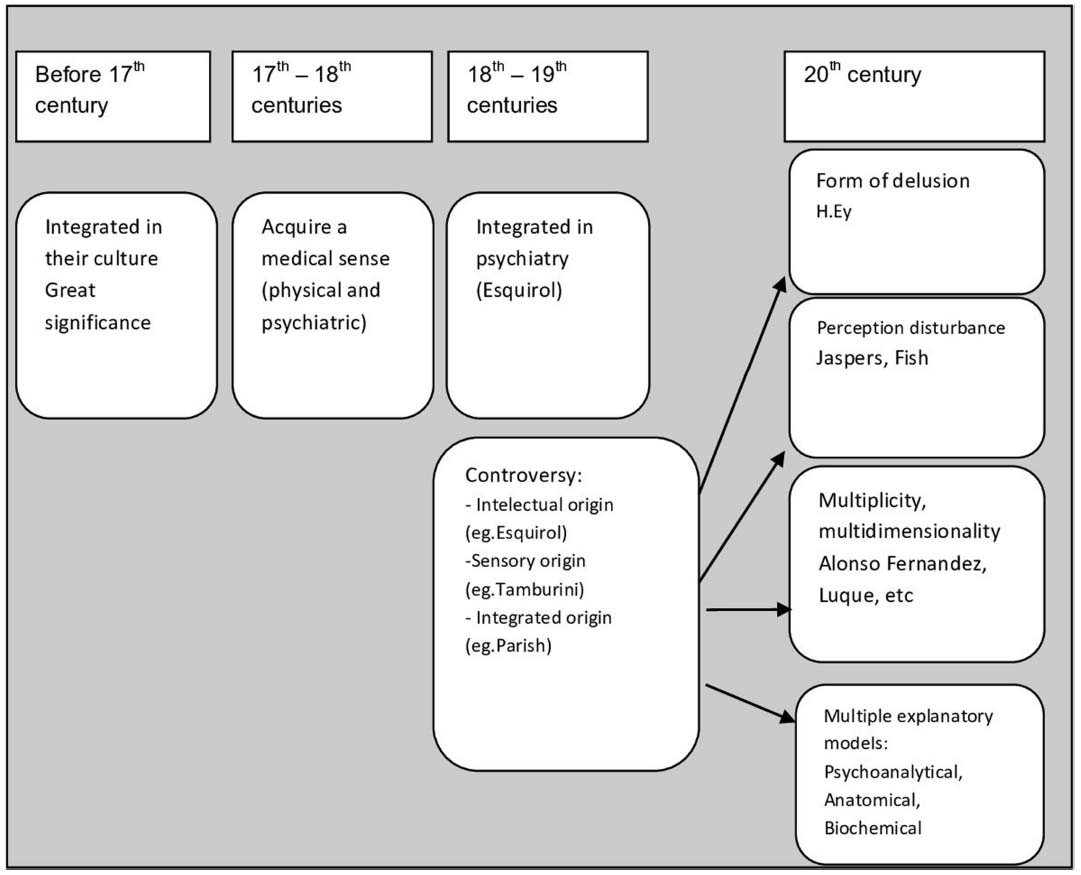

Hallucinations, perceptions in the absence of objectively identifiable stimuli, illustrate the constructive nature of perception. Here, we highlight the role of prior beliefs as a critical elicitor of hallucinations. Recent empirical work from independent laboratories shows that strong, overly precise priors can engender hallucinations in healthy subjects and that individuals who hallucinate in the real world are more susceptible to these laboratory phenomena. We consider these observations in light of work demonstrating apparently weak, or imprecise, priors in psychosis. Appreciating the interactions within and between hierarchies of inference can reconcile this apparent disconnect. Data from neural networks, human behavior, and neuroimaging support this contention. This work underlines the continuum from normal to aberrant perception, encouraging a more empathic approach to clinical hallucinations.

It is possible that illusions could fail at low levels of processing and hallucinations could be generated as a response, higher in the hierarchy, as suggested by the work with deep neural networks (see below). In Figure 1, for example, the precisions of priors and prediction errors at the sensory level are relatively balanced. When illusions are not perceived by patients with schizophrenia, it could be that they fail to attenuate sensory precision, enabling prediction errors to ascend the hierarchy to induce belief updating. We propose that these unattenuated (or un-explained-away) prediction errors induce a particular sort of high-level prior belief that is the hallucination. There are even data suggesting that psychotic individuals with and without hallucinations use different priors to different extents in the same task. People with hallucinations have strong/precise perceptual priors that are not present in psychotic patients who do not hallucinate and who, indeed, may have weak/imprecise priors [...].

Figure 1. An Inferential Hierarchy. Here, we sketch the hierarchical message passing thought to underlie predictive coding and expand on the details of between- and within-layer computations. Sensory input is conveyed via ascending prediction errors in superficial pyramidal cells. Posterior expectations are encoded by the activity of deep pyramidal cells. These cells then provide descending priors that inform prediction errors at the lower level, instantiating the computations that remove the expected input, leaving prediction errors to be assimilated or accommodated, depending on their precision (depicted by the balance, hypothesized to be implemented via slower neuromodulators such as dopamine, acetylcholine, and serotonin, depending on the particular inferential hierarchy). Lateral interactions (horizontal arrows) mediate within-layer predictions about the precision of priors (black) and prediction errors (red).

Intuitively, imagine that I failed to generate an accurate prediction of my inner speech. This would induce large-amplitude prediction errors over the consequences of that speech. Now, these large-amplitude prediction errors themselves provide evidence that it could not have been me speaking; otherwise, they would have been resolved by my accurate predictions. Therefore, the only plausible hypothesis that accounts for these prediction errors is that someone else was speaking. Note that this formulation is consistent with both sides of the story. A failure to suppress (covert or overt) sensory prediction errors in this setting can be due to a failure of sensory attenuation, or it can be due to a failure to generate accurate predictions (i.e., corollary discharge). The consequence of these failures is the false inference that I was not the agent of these sensations. Crucially, however, this conclusion rests upon precise prior beliefs about sensory precision or the amplitude of prediction errors, namely, 'large-amplitude prediction errors can only be generated by things I can't predict'. It is worth noting here that such beliefs mean that prediction errors are informative not only about one's degree of surprise but also about the origin or nature of an input: perceptions generated by external sources are more surprising than those generated by oneself, and perceptions generated by external agents are more unpredictable than those that are non-agentic (for a fuller discussion of this, see [...]).

These synthetic examples of aberrant perceptual inference fit comfortably with the predictive coding formulation, in the sense that most psychedelics act upon serotonergic neuromodulatory receptors in deep pyramidal cells. It is these cells that are thought to encode Bayesian representations that generate top-down predictions. Having said this, models of perceptual hallucinosis and false inference may not be apt to explain the hallucinations seen in psychosis. This brings us back to the distinction between perceptual and active inference.
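The balance between the precision of priors and the precision of prediction errors can be made concrete with a toy calculation. The sketch below is a minimal illustration, assuming a single level with scalar Gaussian beliefs, which is a simplification the text itself does not make. The function name `precision_weighted_update` and all numbers are hypothetical and chosen only for illustration; they are not taken from any study discussed here.

```python
def precision_weighted_update(prior_mean, prior_precision, obs, obs_precision):
    """Combine a Gaussian prior with a Gaussian observation.

    The prediction error (obs - prior_mean) is weighted by the relative
    precision of the sensory evidence, so a very precise prior leaves the
    belief almost unchanged by the input.
    """
    prediction_error = obs - prior_mean
    # Gain on the prediction error: sensory precision relative to total precision.
    gain = obs_precision / (prior_precision + obs_precision)
    posterior_mean = prior_mean + gain * prediction_error
    posterior_precision = prior_precision + obs_precision
    return posterior_mean, posterior_precision


# Hypothetical scenario: the prior expects a voice (1.0), the input is silence (0.0).
balanced, _ = precision_weighted_update(1.0, 1.0, 0.0, 1.0)      # equal precisions
strong_prior, _ = precision_weighted_update(1.0, 9.0, 0.0, 1.0)  # overly precise prior
weak_prior, _ = precision_weighted_update(1.0, 1.0, 0.0, 9.0)    # precise sensory evidence

print(balanced, strong_prior, weak_prior)
```

With balanced precisions the belief settles halfway between expectation and input. With an overly precise prior the belief stays close to the expected voice despite silent input, which is the toy analogue of a prior-driven percept. With precise sensory evidence the prediction error dominates and the belief follows the input.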
